TEEs and the Blockchain

What place do Trusted Execution Environments (TEEs) have in blockchain projects?

What Exactly Are TEEs?

Before we can discuss the application of TEEs to blockchain-related programs, we first have to establish what TEEs even are.

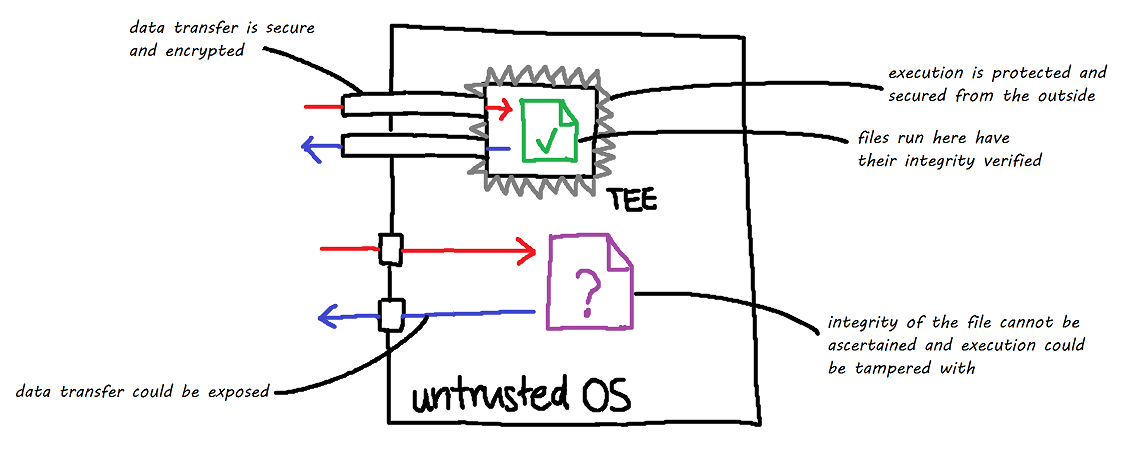

At a high level, a TEE is an isolated processing environment on the CPU: a separate secure area where the code and data that enter remain confidential, and where processes outside the TEE cannot read or tamper with the data within it. A program executing inside a TEE is processed in the clear, but anything outside the TEE that tries to view it will only see encrypted data. These private regions of memory are known as enclaves.

Running code in this isolated, tamper-resistant processing environment "guarantees the authenticity of the executed code": a mechanism called remote attestation can be used to prove its trustworthiness to a third party, and untrusted code should not be able to interfere with the execution of a program within the TEE.

For further reading: "Trusted Execution Environment: What It is, and What It is Not", Sabt et al.

Remote Attestation

Part of the "trust" in a Trusted Execution Environment is trust that the program executed within the TEE remains untampered with and unaffected by any external processes, e.g. the program cannot enter an illegitimate state due to a separate process attempting to modify the registers used in computation.

As previously mentioned, TEEs provide a mechanism called remote attestation, a means to verify the authenticity and integrity of a program's execution, which is how we establish that system of trust. Essentially, the TEEs generate cryptographically signed evidence, which is basically a report that details:

The hardware the program was being run on

The software that was run, represented through, say, hashes, checksums, etc.

System configuration information

etc.

Then, this evidence is signed using keys baked into the hardware itself, be it the CPU the TEE is running on or some tamper-resistant hardware, e.g. a Trusted Platform Module (TPM, i.e. that thing Windows 11 and Riot Vanguard keep bugging you for). These keys make up a root of trust.
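To make the flow above a bit more concrete, here's a minimal sketch of generating and checking attestation evidence. It is a toy model, not any vendor's actual attestation format: `HARDWARE_KEY`, `measure`, `generate_evidence` and `verify_evidence` are all hypothetical names, and an HMAC key stands in for the hardware key (real TEEs sign with asymmetric keys so that the verifier never holds the secret).

```python
import hashlib
import hmac
import json

# ASSUMPTION: a symmetric HMAC key stands in for the key fused into the
# hardware. Real TEEs use asymmetric signatures and certificate chains.
HARDWARE_KEY = b"hypothetical-key-fused-into-the-chip"


def measure(program: bytes) -> str:
    """Hash of the code loaded into the enclave (its 'measurement')."""
    return hashlib.sha256(program).hexdigest()


def generate_evidence(program: bytes, platform: str, config: dict) -> dict:
    """Build a report (hardware, software hash, config) and sign it."""
    report = {
        "platform": platform,
        "measurement": measure(program),
        "config": config,
    }
    payload = json.dumps(report, sort_keys=True).encode()
    signature = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    return {"report": report, "signature": signature}


def verify_evidence(evidence: dict, expected_measurement: str) -> bool:
    """Check the signature first, then check that the expected code ran."""
    payload = json.dumps(evidence["report"], sort_keys=True).encode()
    expected_sig = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_sig, evidence["signature"]):
        return False
    return evidence["report"]["measurement"] == expected_measurement
```

A verifier that knows the hash of the program it expects would call `verify_evidence(evidence, measure(program))`. Evidence for a different program, or a report edited after signing, fails the check.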

The confidentiality and integrity of data (i.e. the lack of external interference in a program's state) are more or less an extension of the CPU's security features themselves: your Intel SGX, AMD SEV, Arm TrustZone etc.

You can read more about remote attestations in far more technical detail here.

Verifiable Compute?

So, do TEEs enable verifiable compute?

Sort of...? It'd be more accurate to call it trustable compute.

What we have to take note of is that TEE evidence isn't (by itself) a cryptographic proof! The evidence is trusted because it is signed by a bunch of keys that serve to guarantee hardware trustworthiness, and for most intents and purposes, we can trust that a program executed within a TEE is what we expect it to be: you wouldn't be able to run a different program or a modified version of a program within a TEE and produce a valid set of attestations corresponding to the original program. However, do note that at a foundational level, this does not mean that we can verify the input of the program.

This is because the input to a TEE program still comes from the outside, untrusted area.

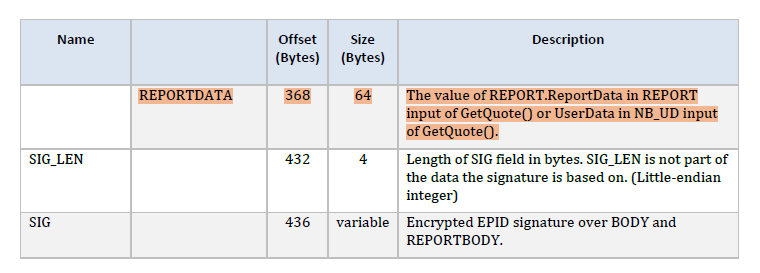

To quote the Intel SGX Developer Guide:

"There is no guarantee that the input parameters of a call into an enclave (ECall) or the return parameters from a call outside an enclave (OCall) will be what the enclave expects because the untrusted domain supplies them."

Regardless, referencing the SGX specification, you can create a signed report that says that the program was run with a given input.

Even with this available, do remember that signatures are not proofs! So a fundamental problem that has to be addressed is that although TEEs can prove that a program was run correctly, they cannot inherently prove that the program was run with the correct input. They can provide signed evidence that it was run with a given input, which you can choose to accept as sufficient. This reduces your security model to trust in the hardware signatures rather than hard, verifiable cryptographic proofs.
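Here's a rough sketch of that gap. The simulated enclave below binds a hash of its input and output into 64 bytes of report data (the size of the user data field in an SGX report) and signs it. Everything else is an assumption for illustration: `run_in_enclave` and `signature_is_valid` are hypothetical, the "computation" is a placeholder, and HMAC again stands in for the hardware signature.

```python
import hashlib
import hmac

# ASSUMPTION: HMAC stands in for the hardware-backed report signature.
HARDWARE_KEY = b"hypothetical-key-fused-into-the-chip"


def run_in_enclave(price_feed: bytes) -> dict:
    """Simulated enclave: compute on the input, then sign what it saw."""
    output = price_feed.upper()  # placeholder for the real computation
    # Bind both the input hash and the output hash into the report data.
    report_data = (
        hashlib.sha256(price_feed).digest() + hashlib.sha256(output).digest()
    )
    signature = hmac.new(HARDWARE_KEY, report_data, hashlib.sha256).digest()
    return {"output": output, "report_data": report_data, "signature": signature}


def signature_is_valid(result: dict) -> bool:
    """Check only that the report data was signed by the hardware key."""
    expected = hmac.new(
        HARDWARE_KEY, result["report_data"], hashlib.sha256
    ).digest()
    return hmac.compare_digest(expected, result["signature"])


honest = run_in_enclave(b"eth/usd=3000")
forged = run_in_enclave(b"eth/usd=1")  # the untrusted host supplied a bogus price
```

Both `honest` and `forged` carry valid signatures. The evidence says "this program ran on this input", and nothing about whether the input was the right one.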

If you want to actually prove that the output corresponds to a supplied input, you would then have to make use of, well, cryptographic proofs of some form (like ZKPs!). In which case, you're basically back to square one...

For further reading: "Attestation Mechanisms for Trusted Execution Environments Demystified", J. Menetrey et al.

What Could TEEs Be Good For?

From what we've established above, TEEs are not some silver bullet for arbitrary Proof of Compute. Rather, their primary use case is as a privacy preservation method, where data confidentiality and integrity during runtime can be ensured. Upholding program integrity also opens up a use case for trustable (but not intrinsically provable!) computing.

This brings us to some obvious benefits that TEEs would bring to certain blockchain-related operations:

Protection of private keys: if your private keys and the operations performed using them exist entirely within a TEE, then they are protected against a potential malicious actor. This can be applied to, say, crypto wallets, where all your signatures are produced within the TEE, preventing malware on your computer from gaining access to the private key by looking for it in memory or something. However, this also means that to maintain such a security model, the private key cannot leave the TEE, which means that you, as an end-user, also won't have access to it. Yikes.

Prevention of tampering with crypto-related software: Similar to the above example, we can theorize that, say, a malicious program attempts to inject code into Metamask or something to drain your crypto wallets. If Metamask were to operate within a TEE, then this simply wouldn't be able to happen since the program's integrity would be upheld. Yippee!

Off-chain compute: We can also consider the use of TEEs for off-chain computation, such as in the case of oracle-like networks (think of Ritual). If we have off-chain computation tasks in which the complexity required to construct, say, a ZKP is far too high, a TEE would make for a decent alternative to ensure that programs are run as intended off-chain before having results published on-chain. Your "proof of compute" is substituted with hardware signatures now, but this is still a step up from most of what we have currently. Phala Network is a project that hinges on this principle.

Private states and confidential computations*: This is meant to be the core benefit that TEEs provide. This can be relevant to privacy oriented projects (think of Monero and Penumbra) where you'd want to hide, say, transaction sizes or trade directions (think of, erm, tumblers. Research into an implementation of a BTC mixer based on TEEs exists). However, do note the asterisk next to this point, as there's more to discuss. After all, TEEs are not infallible...

Looking at projects that have leveraged TEEs (relevant Medium article here), we get a few more ideas:

$TOKI makes use of Intel SGX enclaves to perform "light client verification", whereby the program that nodes run is executed within an enclave for trusted execution, and this program then produces cryptographic proofs that are utilized in an MPC implementation. This makes some sense as you can mess with the program states during MPC to produce erroneous results, and the use of a TEE here would produce more resilient programs.

$TAIKO makes use of an SGX verifier, where ECDSA signatures are checked within a TEE. Once again, this is to ensure the legitimacy of a computation — this one is a bit goofy as the computation itself should already be cryptographically verifiable, and use of TEE here is just to make sure that the computation goes as intended.

$ROSE (Oasis Protocol) makes use of SGX to perform confidential execution of smart contracts, with multiple layers of encryption and decryption before and after execution, where node operators and devs would be barred from reading the data that is being used in execution.

What Aren't TEEs Good For?

To address an elephant in the room: no, you can't use TEEs to get "100% verifiable AI compute". In theory, you could get private LLM interactions (where the prompts passed to the LLM are in some way hidden from the node operator, which would be a huge plus to privacy). However, TEEs, for now, (primarily) only exist for CPUs. GPU TEEs are in active development (see: NVIDIA's Hopper and Blackwell architectures), but this is still very much out of reach to most consumers and by extension, node operators. (Although, with rapid developments in model quantization, we could be running pretty fucking powerful models purely on a CPU real soon).

Attestations are not standardized, so you'd be forcing node operators to run similar hardware that makes use of the same TEE implementation (e.g. all nodes having to run Intel Xeon chips).

TEEs, by virtue of not being bulletproof, probably aren’t the solution to private states and confidential computation... Wait, didn't I just say TEEs could be used for just that?

TEEs Can Be (And Have Been) Broken

Just to throw my two cents in, the "reliance on centralized infra/single trusted party" in the form of the hardware manufacturer responsible for the TEE isn't really the most compelling argument against the use of TEEs?

I know we're all big fans of open source, sticking it to Big Tech and transparency, but Intel going out of their way to compromise their TEE implementation to screw with your blockchains isn't really the boogeyman we're trying to pin here (and if you want to make that argument, then you'd have to argue CPUs are already backdoored to begin with. See: Intel ME, AMD Secure Technology).

What is a fair argument is that TEEs have been broken before, so if your "privacy preserving application" is entirely reliant on the TEEs working as intended, you're sort of setting yourself up to eventually get pwned. A good resource can be found at sgx.fail, which details instances of real life deployments of Intel SGX leading to privacy failures, with the most relevant example being how the Secret Network was compromised.

In the case of $SCRT, there was an over-reliance on TEEs working as intended to the point that all nodes shared the same private key derived from a single consensus seed for operations. So if you could break one TEE (by lifting hardware keys off the hardware itself to forge attestations), you can trivially decrypt other messages. If your entire security model and means of proofs in the network relies on TEEs, you're setting yourself up for a bad, bad time.
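A toy illustration of why a shared seed is so fragile. This is not Secret Network's actual key derivation or encryption scheme: `derive_network_key`, `toy_cipher` and the seed value are hypothetical, and the XOR cipher is only there to keep the sketch self-contained.

```python
import hashlib
import hmac


def derive_network_key(consensus_seed: bytes) -> bytes:
    """Every enclave derives its key from the same seed (hypothetical scheme)."""
    return hmac.new(
        consensus_seed, b"hypothetical-network-key", hashlib.sha256
    ).digest()


def toy_cipher(key: bytes, data: bytes) -> bytes:
    """Toy XOR stream cipher (NOT secure). Applying it twice decrypts."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


# ASSUMPTION: one seed provisioned into every node's enclave.
CONSENSUS_SEED = b"hypothetical-consensus-seed"
node_keys = {
    name: derive_network_key(CONSENSUS_SEED)
    for name in ("node-a", "node-b", "node-c")
}

# A transaction encrypted for the network via node-a...
ciphertext = toy_cipher(node_keys["node-a"], b"private transaction")

# ...can be read by whoever extracts the key from node-c's broken enclave.
leaked_key = node_keys["node-c"]
recovered = toy_cipher(leaked_key, ciphertext)
```

Since all three derived keys are identical, breaking any single enclave is as good as breaking all of them.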

TEE-based projects thus need a recovery plan for when TEEs break, which Intel dubs TCB Recovery, but if TEEs were the only line of defense for your network's security, then it's a bloody death sentence. And there are many ways for TEEs to break (see: SGAxe attack, Foreshadow attack, Downfall vulnerability, ÆPIC Leak) and undoubtedly more to come in the future.
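One piece of such a recovery plan can be sketched as a verifier that refuses evidence from platforms below a minimum patch level, and raises that minimum once a vulnerability is disclosed. This is only a sketch of the idea, not Intel's actual TCB Recovery procedure: the `Evidence` type and `accept` function are hypothetical, and a single integer stands in for what is, on real platforms, a set of component versions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    node_id: str
    measurement: str
    # ASSUMPTION: one integer stands in for the platform's TCB level.
    tcb_version: int


def accept(evidence: Evidence, expected_measurement: str, min_tcb_version: int) -> bool:
    """Accept only the expected program running on a sufficiently patched platform."""
    return (
        evidence.measurement == expected_measurement
        and evidence.tcb_version >= min_tcb_version
    )


patched = Evidence("node-a", "abc123", tcb_version=5)
unpatched = Evidence("node-b", "abc123", tcb_version=4)

# Before the vulnerability is disclosed, both nodes pass with a minimum of 4.
# Afterwards the network raises the minimum to 5, and node-b is rejected
# until its operator updates the platform.
```

This only limits future damage. Anything an attacker already extracted from a broken enclave stays extracted, which is why it can't be the whole security model.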

As for using TEEs for trustable off-chain compute… well, if you're able to produce forged attestations, great job! You've accomplished one (1) node capable of sybilling on the network, enjoy farming free money off of it until you get blacklisted from the network. The impact is not as catastrophic as a complete compromise of the network, as described above, which does lend us more optimism towards using TEEs for this purpose.

So, Do TEEs Have A Place In The Blockchain?

Well, TEEs are good for keeping wallets safe and for privacy-preserving operations, that is until they, y'know, stop being privacy preserving. Perhaps you can make use of TEEs for some level of trustable (again, emphasis on the use of "trust" rather than "verify") off-chain computation, which may prove useful in an age where everyone wants to somehow pipe LLM outputs on-chain or create decentralized AI inference marketplaces.

Here's a nice paper that covers some of the points raised here in further depth as well as other, more technical security considerations that I’ve glossed over: "Lessons Learned from Blockchain Applications of Trusted Execution Environments and Implications for Future Research", Karanjai et al.

So, there are uses for TEEs in the blockchain, but these applications simply aren't the one-shot-kill people are hoping for where we can abandon actual cryptography (the part that cryptocurrencies are named after) in favor of just running everything in a TEE and saying the attestations are all good. All in all, don't hype up a project simply because it uses some TEE/SGX related implementation, because it's probably (and hopefully!) just a small part of its actual security model.